
What Is Load Balancing?

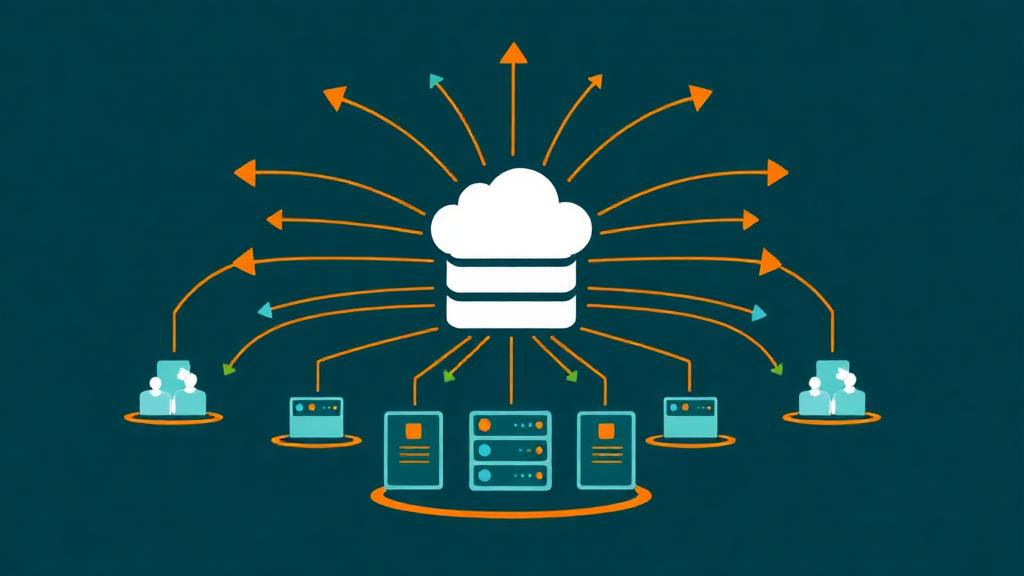

A load balancer is a server (or service) that sits between your users and your backend servers. When a visitor requests your website, the load balancer decides which backend server should handle that request, spreading traffic across the servers so no single one gets overwhelmed.

Think of it like a restaurant host seating guests: instead of sending everyone to the same table, the host distributes diners across all available tables so each waiter handles a manageable workload.

🔄 Traffic Flow With a Load Balancer

When a visitor looks up your domain, DNS resolves it to the load balancer's IP address rather than to any backend server directly. The user never knows multiple servers exist.

The load balancer checks which backend servers are currently healthy (based on its health checks) and applies the configured algorithm to pick one.

The load balancer forwards the request with headers such as X-Forwarded-For and X-Real-IP so the backend knows the original client's IP address.

The chosen server processes the request (for example, running PHP, querying the database, and rendering the page) and sends the response back through the load balancer.

The user receives the response. The extra hop typically adds around 1-3 ms of latency, which users won't notice.
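The forwarding step can be sketched in a few lines of Python. This is a minimal illustration, not any load balancer's actual source: the hypothetical `forwarded_headers` helper appends the connecting client's IP to any existing X-Forwarded-For chain (the convention Nginx's `$proxy_add_x_forwarded_for` variable follows) and sets X-Real-IP and X-Forwarded-Proto. It assumes header names arrive with exactly this capitalization, even though real HTTP header names are case-insensitive.

```python
from typing import Dict


def forwarded_headers(incoming: Dict[str, str], client_ip: str, scheme: str) -> Dict[str, str]:
    """Return a copy of the request headers with the usual proxy headers added.

    Hypothetical helper; assumes header names use exactly this capitalization.
    """
    headers = dict(incoming)  # don't mutate the caller's dict
    prior = headers.get("X-Forwarded-For")
    # Append the directly connected client to any chain added by earlier proxies
    headers["X-Forwarded-For"] = f"{prior}, {client_ip}" if prior else client_ip
    headers["X-Real-IP"] = client_ip       # the address the LB actually saw
    headers["X-Forwarded-Proto"] = scheme  # "http" or "https" on the client side
    return headers


h = forwarded_headers({"Host": "yoursite.com"}, "203.0.113.7", "https")
print(h["X-Forwarded-For"])  # 203.0.113.7
```

If the request already passed through another proxy, the existing value is kept and the new address is appended, so a backend sees the full chain.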

Why You Need Load Balancing

High Availability

If one server crashes, the load balancer detects the failure and routes traffic to the remaining healthy servers. Most users won't notice, although requests already in progress on the failed server may error out.

Better Performance

Distributing requests across multiple servers means each server handles fewer requests, resulting in faster response times for everyone.

Horizontal Scaling

Need more capacity? Add another server behind the load balancer. Scale from 2 to 20 servers without changing your architecture.

Zero-Downtime Deploys

Update one server at a time while the others serve traffic. Rolling deployments mean your site never goes offline for maintenance.

DDoS Mitigation

Load balancers can absorb and distribute attack traffic, rate-limit suspicious IPs, and protect backend servers from direct exposure.

Geographic Distribution

Global load balancers route users to the nearest server cluster, reducing latency for visitors worldwide.

How Load Balancing Works

Load balancing happens at different levels of the networking stack. The load balancer inspects incoming requests and uses an algorithm to decide which backend server should handle each request. It continuously monitors backend health and removes unhealthy servers from the pool.

🏗️ Key Components

- Frontend (listener): the IP address and port the load balancer listens on, typically port 80 (HTTP) and 443 (HTTPS). This is what your DNS points to.

- Backend pool: the group of servers that handle requests. Each server has an IP, a port, and an optional weight. Servers can be added or removed dynamically.

- Health checks: periodic probes sent to each backend (HTTP GET, TCP connect, or a custom script). Unhealthy servers are removed from the pool until they recover.

- Load balancing algorithm: the rule that determines which backend handles each request, such as Round Robin, Least Connections, IP Hash, Weighted, or Random.

- Session persistence (sticky sessions): optional. Ensures a user's requests always go to the same backend, which is required for apps that store session data in server memory.
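The components above map naturally onto a small data model. This is a hypothetical Python sketch (class and field names are illustrative, not any product's API): the frontend is a listen address, the pool is a list of backends with a weight and a health flag, and the algorithm is any function that picks from the healthy backends. Session persistence is left out for brevity.

```python
import random
from dataclasses import dataclass
from typing import Callable, List, Tuple


@dataclass
class Backend:
    host: str
    port: int
    weight: int = 1        # relative capacity, used by weighted algorithms
    healthy: bool = True   # updated by health checks


@dataclass
class LoadBalancer:
    listen: Tuple[str, int]                        # frontend: where the LB accepts traffic
    pool: List[Backend]                            # backend pool
    algorithm: Callable[[List[Backend]], Backend]  # picks one backend per request

    def pick(self) -> Backend:
        # Only healthy servers are eligible; health checks flip .healthy
        candidates = [b for b in self.pool if b.healthy]
        if not candidates:
            raise RuntimeError("no healthy backends in the pool")
        return self.algorithm(candidates)


lb = LoadBalancer(
    listen=("0.0.0.0", 80),
    pool=[Backend("10.0.0.1", 80, weight=3), Backend("10.0.0.2", 80, weight=2)],
    algorithm=random.choice,  # the "Random" algorithm; swap in any other picker
)
lb.pool[0].healthy = False    # simulate a failed health check
print(lb.pick().host)         # 10.0.0.2 (the only healthy backend)
```

Keeping the algorithm as a plain function makes it easy to swap Random for Round Robin or Least Connections without touching the pool or health logic.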

Load Balancing Algorithms

| Algorithm | How It Works | Best For | Complexity |
|---|---|---|---|
| Round Robin | Sends requests to each server in rotation: A→B→C→A→B→C | Equal servers, general use | Simple |
| Weighted Round Robin | Like Round Robin, but servers with higher weights get proportionally more requests (e.g., a 2:1 ratio) | Mixed server capacities | Simple |
| Least Connections | Sends to the server with the fewest active connections right now | Variable request durations | Medium |
| Weighted Least Connections | Least Connections adjusted by server weight/capacity | Mixed servers + variable load | Medium |
| IP Hash | Hashes the client IP so the same client always reaches the same server | Session persistence (sticky) | Simple |
| Least Response Time | Sends to the server with the fastest average response time | Performance-critical apps | Medium |
| Random | Selects a random backend server for each request | Large server pools (50+) | Simple |
| Resource-Based | Routes based on server CPU/memory utilization (agent-based) | Cloud auto-scaling | Complex |
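The four most common algorithms in the table fit in a few lines each. This is a Python sketch for intuition, not any load balancer's source code. The weighted variant uses "smooth" weighted round robin (the approach used by Nginx's default weighted round robin), which interleaves servers instead of sending a burst of consecutive requests to the heaviest one. `ip_hash` here is a plain SHA-256 hash modulo the server count, so adding or removing a server remaps most clients; consistent hashing reduces that churn.

```python
import hashlib
import itertools
from typing import Dict, List


class RoundRobin:
    """Round Robin: A -> B -> C -> A -> B -> C, ignoring load."""

    def __init__(self, servers: List[str]):
        self._cycle = itertools.cycle(servers)

    def pick(self) -> str:
        return next(self._cycle)


class SmoothWeightedRoundRobin:
    """Weighted Round Robin: each server gets requests in proportion to its
    weight, interleaved with the others rather than sent in a burst."""

    def __init__(self, weights: Dict[str, int]):
        self.weights = dict(weights)
        self.current = {s: 0 for s in weights}
        self.total = sum(weights.values())

    def pick(self) -> str:
        # Every server earns its weight; the highest score wins and pays back the total
        for server, weight in self.weights.items():
            self.current[server] += weight
        best = max(self.current, key=self.current.get)
        self.current[best] -= self.total
        return best


class WeightedLeastConnections:
    """Least Connections scaled by weight (all weights 1 = plain Least Connections)."""

    def __init__(self, weights: Dict[str, int]):
        self.weights = dict(weights)
        self.active = {s: 0 for s in weights}

    def pick(self) -> str:
        # Lowest active/weight ratio wins, so a weight-2 server may hold twice as many
        best = min(self.active, key=lambda s: self.active[s] / self.weights[s])
        self.active[best] += 1  # connection opened
        return best

    def release(self, server: str) -> None:
        self.active[server] -= 1  # call when the connection closes


def ip_hash(client_ip: str, servers: List[str]) -> str:
    """IP Hash: the same client IP maps to the same server while the list is unchanged."""
    digest = hashlib.sha256(client_ip.encode()).digest()
    return servers[int.from_bytes(digest[:8], "big") % len(servers)]


rr = RoundRobin(["A", "B", "C"])
print([rr.pick() for _ in range(6)])   # ['A', 'B', 'C', 'A', 'B', 'C']

wrr = SmoothWeightedRoundRobin({"A": 3, "B": 2, "C": 1})
print([wrr.pick() for _ in range(6)])  # ['A', 'B', 'A', 'C', 'B', 'A']

lc = WeightedLeastConnections({"A": 1, "B": 1})
print(lc.pick(), lc.pick(), lc.pick())  # A B A
```

Note that Least Connections only works if the balancer is told when connections close (the `release` call); Round Robin and IP Hash need no feedback at all, which is part of why they are the simplest options.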

💡 Which Algorithm to Start With

Start with Round Robin if your servers are identical (same CPU, RAM, config). Switch to Least Connections if some requests take longer than others (API calls, database-heavy pages). Use IP Hash only if your application requires session stickiness and you can't use centralized sessions (Redis).

Types of Load Balancers

Software Load Balancers

Software running on a standard server. Most cost-effective option. With appropriate tuning, Nginx and HAProxy can handle 100,000+ concurrent connections on a $12/month VPS. Full control over configuration. Best for: most web applications.

Examples: Nginx, HAProxy, Traefik, Caddy, Envoy

Cloud-Managed Load Balancers

Fully managed by the cloud provider. No server to maintain, automatic scaling, built-in health checks, and integrated with auto-scaling groups. Higher cost but zero operational overhead. Best for: cloud-native applications.

Examples: AWS ALB/NLB, GCP Load Balancer, Azure LB, DigitalOcean LB

DNS-Based Load Balancing

Distributes traffic at the DNS level by returning different IP addresses for each query. Enables global traffic distribution and geographic routing. Simplest to set up but least granular: resolvers and browsers cache DNS answers (for the record's TTL or longer), so traffic shifts and failovers take effect slowly. Best for: multi-region distribution.

Examples: Cloudflare LB, Route 53, NS1

Hardware Load Balancers

Dedicated physical appliances with specialized chips for maximum throughput. Handle millions of connections with sub-millisecond latency. Cost: $10,000-100,000+. Best for: enterprise data centers, financial institutions. Most sites will never need these.

Examples: F5 BIG-IP, Citrix ADC, A10 Networks

Layer 4 vs Layer 7 Load Balancing

| Feature | Layer 4 (Transport) | Layer 7 (Application) |
|---|---|---|
| Routes based on | IP address + TCP/UDP port | HTTP headers, URL path, cookies, hostname |
| Inspects content? | ❌ No (forwards TCP/UDP traffic without parsing it) | ✅ Yes (reads the HTTP request) |
| SSL termination | Usually pass-through (backend decrypts) | ✅ Terminates at LB (offloads SSL) |
| URL-based routing | ❌ Not possible | ✅ /api → Server A, /static → Server B |
| Host-based routing | ❌ Not possible | ✅ app.com → Pool A, api.com → Pool B |
| Performance | ⭐ Faster (no content parsing) | Slightly slower (parses HTTP) |
| Use case | TCP/UDP services, databases, gaming | Web apps, APIs, microservices |
| AWS equivalent | NLB (Network Load Balancer) | ALB (Application Load Balancer) |
| Nginx equivalent | stream { } block | http { } + upstream block |

For web hosting: Use Layer 7 for almost all web applications. It gives you URL-based routing, SSL termination, header manipulation, and HTTP-aware health checks. Only use Layer 4 for non-HTTP services like databases, game servers, or raw TCP/UDP applications.
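To see why URL- and host-based routing need Layer 7, here is a minimal Python sketch of the decision a Layer 7 load balancer makes after parsing the HTTP request. The rule table mirrors the examples in the comparison table (app.com/api.com, /api, /static); the pool names and the first-match-wins ordering are assumptions for illustration. A Layer 4 balancer never parses the Host header or the path, so it has nothing to feed into such a function.

```python
from typing import List, Optional, Tuple

# Hypothetical rules: (host or None for any host, path prefix, pool).
# First match wins, so list the most specific rules first.
RULES: List[Tuple[Optional[str], str, str]] = [
    ("api.com", "/", "pool_b"),     # host-based: api.com -> Pool B
    (None, "/api", "server_a"),     # path-based: /api -> Server A
    (None, "/static", "server_b"),  # path-based: /static -> Server B
    (None, "/", "pool_a"),          # everything else -> Pool A
]


def prefix_matches(path: str, prefix: str) -> bool:
    """'/api' matches '/api' and '/api/users', but not '/apiary'."""
    return path == prefix or path.startswith(prefix.rstrip("/") + "/")


def choose_pool(host: str, path: str) -> str:
    hostname = host.split(":")[0].lower()  # drop any ":port" from the Host header
    for rule_host, prefix, pool in RULES:
        if rule_host is not None and rule_host != hostname:
            continue
        if prefix_matches(path, prefix):
            return pool
    raise LookupError(f"no rule for {host}{path}")


print(choose_pool("app.com", "/api/users"))  # server_a
print(choose_pool("api.com", "/v1/orders"))  # pool_b
```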

Best Load Balancing Providers

| Provider | Type | Price | SSL Term. | Health Checks | Best For |
|---|---|---|---|---|---|
| Nginx (self-hosted) | Software L7 | $0 (+ VPS) | ✅ | ✅ Basic | Most web apps |
| HAProxy (self-hosted) | Software L4/L7 | $0 (+ VPS) | ✅ | ✅ Advanced | High performance |
| Cloudflare LB | DNS + Proxy | $5/mo + $5/origin | ✅ | ✅ Global | Best value managed |
| DigitalOcean LB | Cloud L4/L7 | $12/mo flat | ✅ (Let's Encrypt) | ✅ | DO ecosystem |
| AWS ALB | Cloud L7 | ~$16/mo + usage | ✅ (ACM free) | ✅ Advanced | AWS ecosystem |
| AWS NLB | Cloud L4 | ~$16/mo + usage | ✅ | ✅ | TCP/UDP, ultra-low latency |
| GCP Load Balancer | Cloud L7 | ~$18/mo + usage | ✅ | ✅ Global | Global Anycast |
| Traefik | Software L7 | $0 (open source) | ✅ (auto LE) | ✅ | Docker/Kubernetes |
| Caddy | Software L7 | $0 (open source) | ✅ (auto HTTPS) | ✅ Basic | Simplest config |

Setup Guides

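Nginx Load Balancer (Most Common)

```nginx
upstream backend {
    # Algorithm: least_conn, ip_hash, or omit for the default (round robin).
    # Note: ip_hash cannot be combined with a backup server.
    least_conn;

    # Passive health checks: after 3 failed attempts within 30s, skip the server for 30s
    server 10.0.0.1:80 weight=3 max_fails=3 fail_timeout=30s;  # Higher-capacity server
    server 10.0.0.2:80 weight=2 max_fails=3 fail_timeout=30s;
    server 10.0.0.3:80 weight=1 backup;  # Only used if the others are unavailable
}

server {
    listen 80;
    server_name yoursite.com;

    location / {
        proxy_pass http://backend;

        # Pass the original host, client IP, and scheme to the backend
        proxy_set_header Host $host;
        proxy_set_header X-Real-IP $remote_addr;
        proxy_set_header X-Forwarded-For $proxy_add_x_forwarded_for;
        proxy_set_header X-Forwarded-Proto $scheme;

        # Timeouts
        proxy_connect_timeout 5s;
        proxy_read_timeout 60s;

        # Retry on the next server for these failures (they also count toward max_fails)
        proxy_next_upstream error timeout http_500 http_502 http_503;
    }
}
```

HAProxy Configuration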

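```haproxy
defaults
    mode http                  # Layer 7 mode: needed for the HTTPS redirect and forwardfor
    timeout connect 5s
    timeout client  60s
    timeout server  60s

frontend http_front
    bind *:80
    bind *:443 ssl crt /etc/ssl/yoursite.pem
    redirect scheme https if !{ ssl_fc }   # Send plain-HTTP visitors to HTTPS
    default_backend http_back

backend http_back
    balance leastconn
    option forwardfor                      # Add X-Forwarded-For with the client IP
    option httpchk GET /health             # Active HTTP health check
    http-check expect status 200
    server web1 10.0.0.1:80 check weight 3
    server web2 10.0.0.2:80 check weight 2
    server web3 10.0.0.3:80 check weight 1 backup   # Only used if web1 and web2 are down
```

Cloudflare Load Balancing (Managed)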

Dashboard → Traffic → Load Balancing → Create Pool. Add your server IPs (at least two servers) and name the pool.

Create an HTTP health check (monitor) for your /health endpoint. Interval: 60s. Threshold: 2 consecutive failures before a server is marked unhealthy.

Create a new load balancer, assign your pool, set a fallback pool (if any), and choose a traffic steering policy (Geo, Random, or Off).

Cloudflare automatically creates a DNS record pointing to the load balancer, and traffic is proxied through Cloudflare's network (orange cloud).

Health Checks & Failover

Health checks are the most important feature of any load balancer. They detect when a backend server is unhealthy and automatically remove it from the pool—preventing users from being sent to a broken server.

| Check Type | How It Works | Detects | Recommended |
|---|---|---|---|
| TCP Connect | Attempts TCP connection to port. Success = healthy. | Server down, port closed | Minimum baseline |
| HTTP GET | Sends HTTP request to /health endpoint. 200 = healthy. | App crashes, errors | ⭐ Best for web apps |
| HTTP Content | Checks response body for expected string (e.g., 'OK'). | Partial failures, bad deploys | ⭐⭐ Most thorough |
| Database query | Health endpoint queries DB, returns OK. Detects DB issues. | Database failures | Critical for DB-driven apps |
| Custom script | Runs a script that checks CPU, disk, memory, queue depth. | Resource exhaustion | Advanced setups |
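Whichever check type you use, a load balancer should not flip a server's state on a single result. Below is a minimal Python sketch with illustrative thresholds (3 consecutive failures to mark a server down, 2 consecutive successes to bring it back; HAProxy exposes the same idea through its `fall` and `rise` server options). `HealthState` tracks the streak, and the hypothetical `http_probe` function performs an HTTP GET check, or an HTTP Content check when `expect_body` is given, using only the standard library.

```python
import http.client
import urllib.request
from dataclasses import dataclass
from typing import Optional


def http_probe(url: str, expect_body: Optional[str] = None, timeout: float = 2.0) -> bool:
    """HTTP GET check (200 = healthy); optionally also an HTTP Content check."""
    try:
        with urllib.request.urlopen(url, timeout=timeout) as resp:
            if resp.status != 200:
                return False
            if expect_body is None:
                return True
            return expect_body in resp.read().decode("utf-8", "replace")
    except (OSError, http.client.HTTPException):
        # Refused connections, timeouts, and 4xx/5xx (urllib raises HTTPError) all fail
        return False


@dataclass
class HealthState:
    fall: int = 3        # consecutive failures before marking the server down
    rise: int = 2        # consecutive successes before putting it back in the pool
    healthy: bool = True
    streak: int = 0      # consecutive results that disagree with the current state

    def record(self, ok: bool) -> bool:
        if ok == self.healthy:
            self.streak = 0  # result confirms the current state
        else:
            self.streak += 1
            threshold = self.fall if self.healthy else self.rise
            if self.streak >= threshold:
                self.healthy = ok
                self.streak = 0
        return self.healthy
```

A prober would call something like `state.record(http_probe("http://10.0.0.1/health"))` on every interval and keep the server out of the pool while `state.healthy` is False. The streak counter means a single dropped packet does not eject a server, and a flapping server must prove itself twice in a row before it rejoins.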

🏥 Recommended Health Endpoint

Create a /health endpoint in your app that checks:

- ✅ App process is running and responding

- ✅ Database connection is active (run a simple SELECT 1)

- ✅ Disk space is above threshold (>10% free)

- ✅ Return 200 OK with JSON when every check passes, and a non-200 status (such as 503) when any check fails:

{"status":"healthy","db":"ok","uptime":"12h"}
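Here is one way such an endpoint might look, as a sketch using only Python's standard library. Assumptions to flag: sqlite3 stands in for your real database driver (swap in your own connection and its SELECT 1), while `DB_PATH`, the 10% free-space threshold, and port 8080 are placeholders. The handler returns 503 when any check fails so the load balancer's HTTP check sees a non-200 status.

```python
import json
import shutil
import sqlite3
import time
from http.server import BaseHTTPRequestHandler, HTTPServer
from typing import Any, Dict, Tuple

START = time.monotonic()
DB_PATH = "app.db"  # hypothetical: replace with your real database connection


def health_report(db_path: str = DB_PATH, disk_path: str = "/",
                  min_free: float = 0.10) -> Tuple[int, Dict[str, Any]]:
    checks: Dict[str, str] = {}

    # Database: run a trivial query to prove the connection works
    try:
        conn = sqlite3.connect(db_path, timeout=2)
        try:
            conn.execute("SELECT 1").fetchone()
        finally:
            conn.close()
        checks["db"] = "ok"
    except sqlite3.Error as exc:
        checks["db"] = f"error: {exc}"

    # Disk: require more than min_free of the volume to be free
    usage = shutil.disk_usage(disk_path)
    free = usage.free / usage.total
    checks["disk"] = "ok" if free > min_free else f"low ({free:.0%} free)"

    healthy = all(v == "ok" for v in checks.values())
    uptime_h = int((time.monotonic() - START) // 3600)
    body = {"status": "healthy" if healthy else "unhealthy", **checks, "uptime": f"{uptime_h}h"}
    return (200 if healthy else 503), body


class HealthHandler(BaseHTTPRequestHandler):
    def do_GET(self) -> None:
        if self.path != "/health":
            self.send_error(404)
            return
        status, body = health_report()
        payload = json.dumps(body).encode()
        self.send_response(status)
        self.send_header("Content-Type", "application/json")
        self.send_header("Content-Length", str(len(payload)))
        self.end_headers()
        self.wfile.write(payload)


def serve(port: int = 8080) -> None:
    """Blocks forever; point the load balancer's health check at /health on this port."""
    HTTPServer(("0.0.0.0", port), HealthHandler).serve_forever()
```

The "app process is running" check is implicit: if the handler answers at all, the process is alive. Keep the endpoint cheap, since every load balancer node will call it every few seconds.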

SSL Termination

SSL termination means the load balancer handles HTTPS encryption/decryption instead of the backend servers. This is the standard approach and has significant benefits:

✅ Terminate at Load Balancer (recommended)

- One SSL certificate managed in one place

- Backends talk plain HTTP (simpler config)

- Reduces CPU load on backends by roughly 10-20%

- Easier certificate renewal (one location)

- Load balancer can inspect HTTP headers

- Traffic between the LB and the backends is unencrypted, so it should stay on a private network

🔒 End-to-End Encryption (SSL passthrough)

- Full encryption from user to backend

- May be required by strict compliance regimes such as PCI-DSS

- Load balancer can't read HTTP content (so no URL- or header-based routing)

- Each backend needs its own SSL certificate

- More complex to manage

- Only needed for strict compliance requirements

Common Mistakes to Avoid

Not using health checks (or using TCP-only)

→ Always use HTTP health checks with a proper /health endpoint that verifies your app and database are working. TCP checks only confirm the port is open—your app could be crashing on every request.

Storing sessions in server memory

→ With load balancing, a user might hit Server A on one request and Server B on the next. If sessions are stored in memory, they'll be logged out. Use centralized session storage: Redis, database, or JWT tokens.
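The failure mode is easy to reproduce. In this Python sketch, two hypothetical app servers sit behind plain round robin, and a plain dict stands in for the shared store (in production that would be Redis or a database). With per-server dicts, the session created on server A is unknown to server B; with one shared store, the second request still finds it.

```python
import itertools
import uuid
from typing import Dict, Optional


class AppServer:
    """Hypothetical backend that keeps sessions in a dict."""

    def __init__(self, name: str, sessions: Dict[str, str]):
        self.name = name
        self.sessions = sessions  # private to this server, or shared by all

    def login(self, user: str) -> str:
        session_id = uuid.uuid4().hex
        self.sessions[session_id] = user
        return session_id

    def current_user(self, session_id: str) -> Optional[str]:
        return self.sessions.get(session_id)  # None means "logged out"


def second_request_user(shared: bool) -> Optional[str]:
    shared_store: Dict[str, str] = {}  # stands in for Redis or a database
    servers = [AppServer(n, shared_store if shared else {}) for n in ("A", "B")]
    balancer = itertools.cycle(servers)      # plain round robin, no stickiness
    sid = next(balancer).login("alice")      # request 1 lands on A
    return next(balancer).current_user(sid)  # request 2 lands on B


print(second_request_user(shared=False))  # None (logged out)
print(second_request_user(shared=True))   # alice
```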

Not forwarding real client IPs

→ Without X-Forwarded-For and X-Real-IP headers, your backend sees the load balancer's IP for every request. This breaks rate limiting, analytics, geo-targeting, and security logging. Always configure proxy headers.
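Forwarding the headers is only half the job: the backend must read them without trusting forged values, because any client can send its own X-Forwarded-For. A Python sketch, assuming your load balancers' addresses fall in 10.0.0.0/8 (adjust `TRUSTED_PROXIES` to your network): believe the header only when the request arrives from a trusted proxy, then walk the list from right to left and take the first address that is not one of your proxies. Nginx's realip module (`set_real_ip_from` with `real_ip_recursive on`) applies the same right-to-left idea.

```python
import ipaddress
from typing import Optional

# Assumption: your load balancers live in this private range. Adjust to your network.
TRUSTED_PROXIES = [ipaddress.ip_network("10.0.0.0/8")]


def is_trusted(addr: str) -> bool:
    try:
        ip = ipaddress.ip_address(addr)
    except ValueError:
        return False  # garbage in the header is never a trusted proxy
    return any(ip in net for net in TRUSTED_PROXIES)


def real_client_ip(remote_addr: str, x_forwarded_for: Optional[str]) -> str:
    # A direct connection from outside: the header could be forged, so ignore it
    if not is_trusted(remote_addr) or not x_forwarded_for:
        return remote_addr
    hops = [h.strip() for h in x_forwarded_for.split(",") if h.strip()]
    # Right-most entries were appended by our own proxies; left-most can be spoofed
    for hop in reversed(hops):
        if not is_trusted(hop):
            return hop
    return hops[0] if hops else remote_addr  # every hop was one of our proxies


print(real_client_ip("10.0.0.5", "6.6.6.6, 198.51.100.7"))  # 198.51.100.7
```

Taking the left-most entry instead would let a client put any address it likes there and bypass IP-based rate limiting.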

Single load balancer as SPOF

→ If the load balancer itself goes down, everything goes down. Use cloud-managed LBs (inherently redundant), Keepalived with a floating IP for self-hosted setups, or DNS-based failover between two LB instances.

Over-engineering for small traffic

→ If you have 5,000 monthly visitors, you don't need a load balancer—you need a CDN (Cloudflare free). Load balancing adds complexity. Only add it when a single server genuinely can't handle the load.

Not testing failover scenarios

→ Regularly stop a backend server and verify: Does the LB detect it within your timeout? Do users experience errors? Does traffic reroute smoothly? Does the server rejoin automatically when restarted?

